In an internal memo, Sebastien Marineau-Mes, Apple’s vice president of Software, applauded the team’s hard work on Expanded Protections for Children. While assuring team members of their valuable contribution to protecting children from predators, Marineau-Mes said that critics’ concerns stem from “misunderstandings” about the implications.

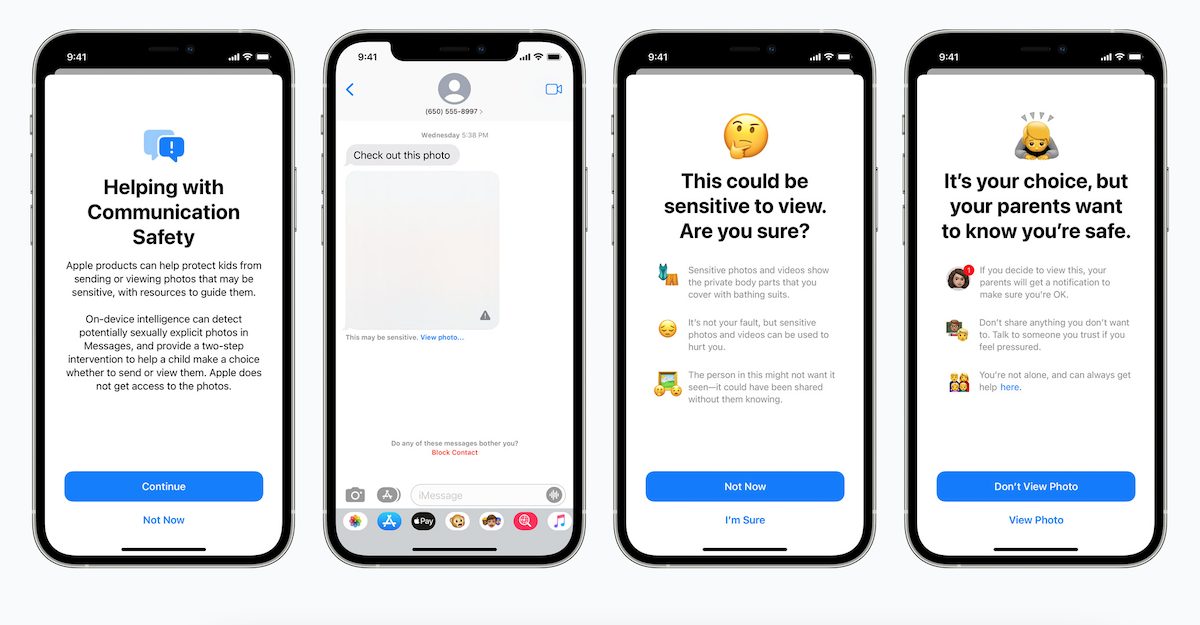

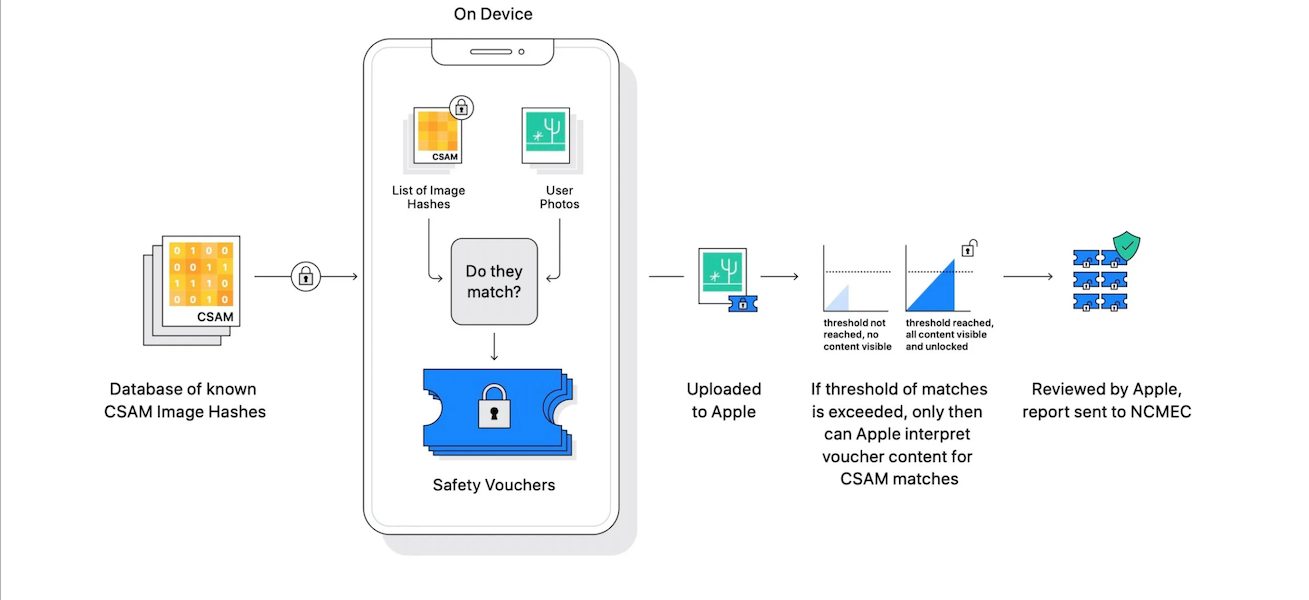

Apple recently unveiled new child safety features that let the company analyze iMessages for sexually explicit images and scan iCloud Photos to detect child sexual abuse material (CSAM). The features also update Siri and Search to warn, intervene, and guide children who search for CSAM-related topics.

The pushback against the new child safety systems has been strong. Cryptography experts argue that although these features are meant to protect children, they can easily be exploited by authoritarian regimes to access users’ messages and photographs and, more importantly, that they put users’ privacy in jeopardy.

No matter how well-intentioned, @Apple is rolling out mass surveillance to the entire world with this. Make no mistake: if they can scan for kiddie porn today, they can scan for anything tomorrow.

They turned a trillion dollars of devices into iNarcs—*without asking.* https://t.co/wIMWijIjJk

— Edward Snowden (@Snowden) August 6, 2021

Apple and NCMEC are proud of the new child safety systems, and call criticism “screeching” voices

To boost the morale of the team behind the new Expanded Protections for Children, Marineau-Mes praised their diligent effort in the memo, but at the same time trivialized critics’ concerns.

Today marks the official public unveiling of Expanded Protections for Children, and I wanted to take a moment to thank each and every one of you for all of your hard work over the last few years. We would not have reached this milestone without your tireless dedication and resiliency.

Keeping children safe is such an important mission. In true Apple fashion, pursuing this goal has required deep cross-functional commitment, spanning Engineering, GA, HI, Legal, Product Marketing and PR. What we announced today is the product of this incredible collaboration, one that delivers tools to protect children, but also maintain Apple’s deep commitment to user privacy.

We’ve seen many positive responses today. We know some people have misunderstandings, and more than a few are worried about the implications, but we will continue to explain and detail the features so people understand what we’ve built. And while a lot of hard work lays ahead to deliver the features in the next few months, I wanted to share this note that we received today from NCMEC. I found it incredibly motivating, and hope that you will as well.

I am proud to work at Apple with such an amazing team. Thank you!

The company’s new client-side photo hashing system scans iCloud Photos for CSAM by checking them against the database provided by the National Center for Missing and Exploited Children (NCMEC) in the United States. The memo also included a message from NCMEC that dismisses objections as “screeching voices.”

Team Apple,

I wanted to share a note of encouragement to say that everyone at NCMEC is SO PROUD of each of you and the incredible decisions you have made in the name of prioritizing child protection.

It’s been invigorating for our entire team to see (and play a small role in) what you unveiled today.

I know it’s been a long day and that many of you probably haven’t slept in 24 hours. We know that the days to come will be filled with the screeching voices of the minority.

Our voices will be louder.

Our commitment to lift up kids who have lived through the most unimaginable abuse and victimizations will be stronger.

During these long days and sleepless nights, I hope you take solace in knowing that because of you many thousands of sexually exploited victimized children will be rescued, and will get a chance at healing and the childhood they deserve.

Thank you for finding a path forward for child protection while preserving privacy.

via 9to5Mac
