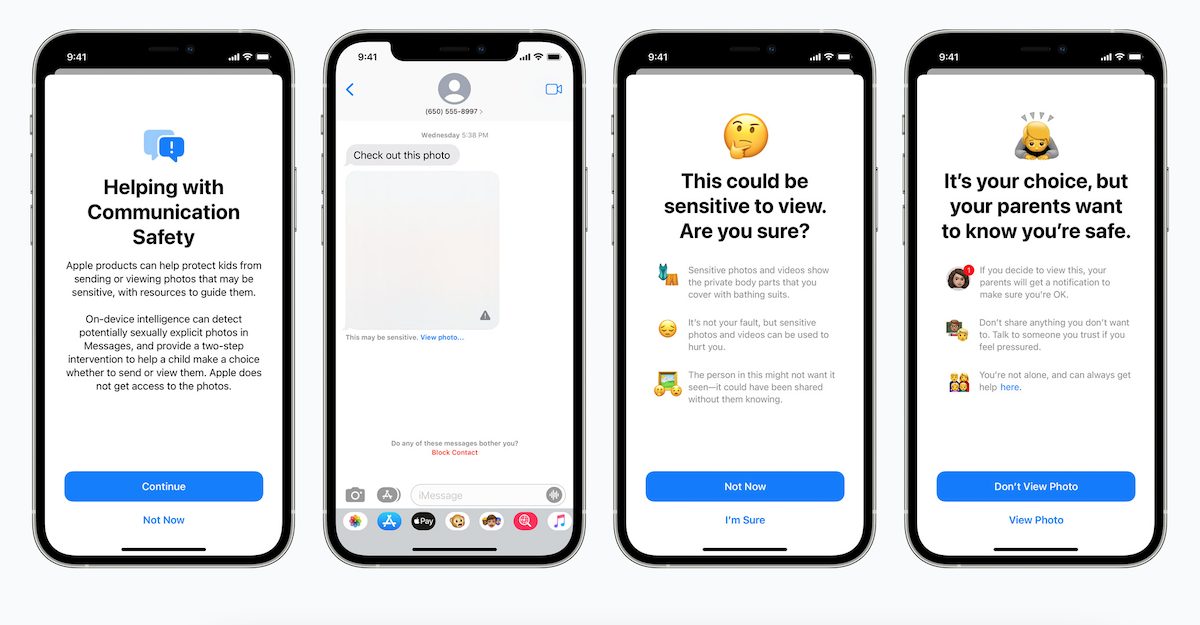

The Electronic Frontier Foundation has responded to Apple delaying the launch of its new child protection features, which include Communication Safety in Messages, CSAM detection, and expanded guidance in Siri and Search. While EFF is “pleased” Apple is listening to the concerns of activists and organizations around the globe, the group is urging Apple to drop its plan entirely.

EFF wants Apple to drop its CSAM plan completely

Apple recently announced it was going to “take additional time” to collect input and make changes before releasing these “critically important” child safety features within a few months. While EFF responded favorably to the announcement, the group believes delays are not good enough and Apple should abandon its CSAM plan entirely.

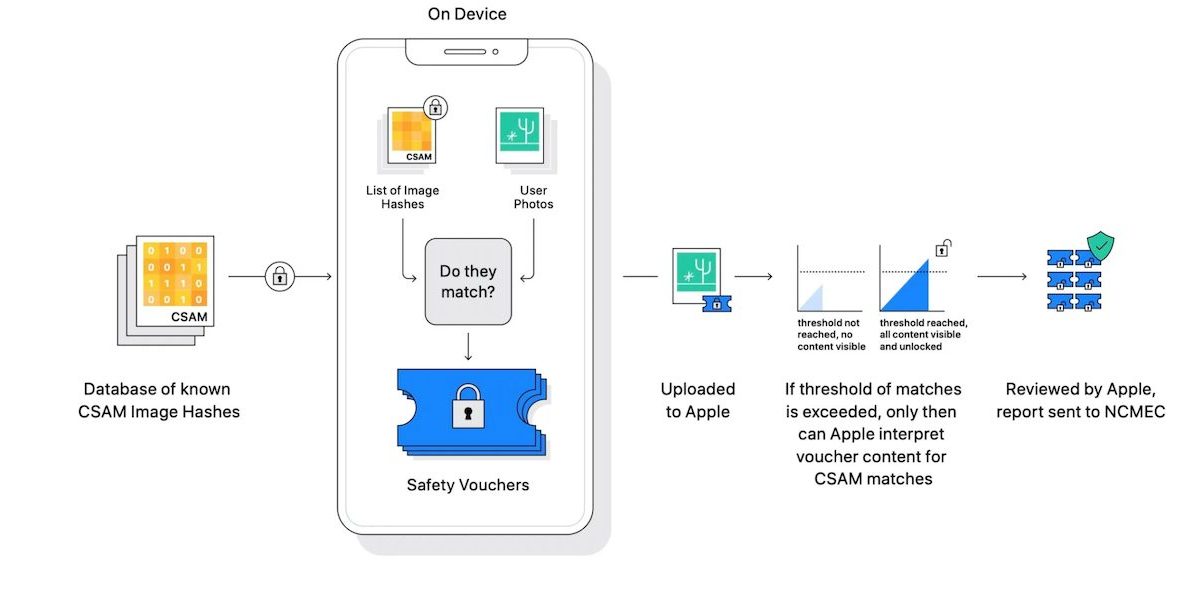

In a blog post, the digital rights group said it was “pleased Apple is now listening to the concerns” of users about the possible threat posed by its phone scanning tools. However, Apple “must go further than just listening, and drop its plans to put a backdoor into its encryption entirely.”

The group’s statement echoed the criticism Apple received from 90 organizations across the globe urging the company not to implement these features over concerns they could “lead to the censoring of protected speech, threaten the privacy and security of people around the world, and have disastrous consequences for many children.”

This week, a petition hosted by EFF demanding that the Cupertino tech giant abandon its plans reached 25,000 signatures. Combined with other petitions by groups such as Fight for the Future and OpenMedia, the total adds up to more than 50,000 signatures.

The enormous coalition that has spoken out will continue to demand that user phones – both their messages and their photos – be protected, and that the company maintain its promise to provide real privacy to its users.

Currently, we do not know what changes Apple plans to make to its CSAM features during the delay. However, it is clear the tech giant will not be short of suggestions from critics before it decides to launch the features.
