Instagram has launched new safety features and resources designed specifically for young users. The latest update of the app offers a new Parents Guide with information on safety tools and privacy settings, AI and ML technology that estimates users' ages so that age-appropriate features can be applied, and more.

Protecting young people on Instagram is important to us. Today, we’re sharing updates on new features and resources as part of our ongoing efforts to keep our youngest community members safe. We’re also providing an update on our work to understand age in a way that helps keep people, especially young people, safe. We have dedicated teams focused on youth safety, and we work closely with experts to inform the features we develop.

New youth safety features on Instagram offer new technology to verify age, restrict DMs between teens and adults, and more

The social media company is working to make its platform safer for young users by verifying their age, applying new age-appropriate features, and providing a new guide for parents with tips and conversation starters to help their teenage children stay safe online.

We believe that everyone should have a safe and supportive experience on Instagram. These updates are a part of our ongoing efforts to protect young people, and our specialist teams will continue to invest in new interventions that further limit inappropriate interactions between adults and teens.

Here is a list of new features and resources in the Instagram update:

- A new Parents Guide, compiled in the U.S. in collaboration with The Child Mind Institute and ConnectSafely. The guide is also published in Argentina, Brazil, India, Indonesia, Japan, Mexico, and Singapore.

- New artificial intelligence (AI) and machine learning (ML) technology to stop users under 13 from using Instagram and to verify young users’ ages so that the following new age-appropriate features can be applied to their accounts:

- Restrict Direct Messages between teens and adults by preventing adults from messaging users under 18 who don’t follow them.

For example, when an adult tries to message a teen who doesn’t follow them, they receive a notification that DM’ing them isn’t an option.


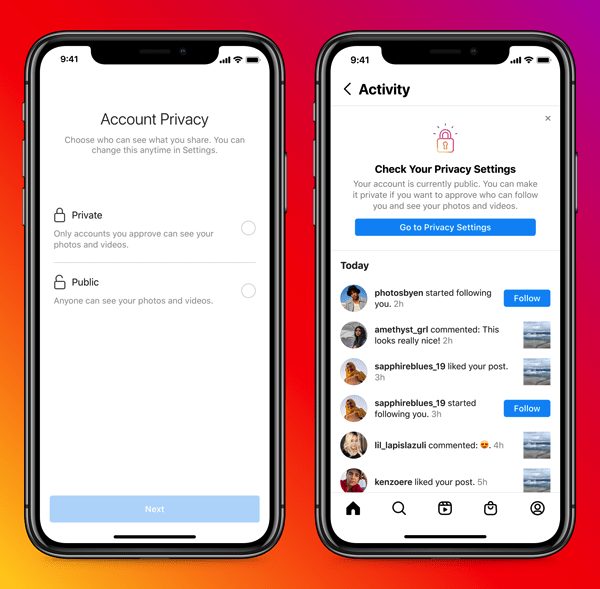

- Safety notices that prompt teens to be cautious when an adult they are already interacting with in DMs has been exhibiting suspicious behavior.

For example, if an adult is sending a large number of friend or message requests to people under 18, we’ll use this tool to alert the recipients within their DMs and give them an option to end the conversation, or block, report, or restrict the adult.


- Making it difficult for adults to find and follow teens by restricting adults from seeing teen accounts in ‘Suggested users’, preventing them from discovering teen content, and automatically hiding their comments on teens’ public posts.


- Encouraging users under 18 to choose a private profile when they sign up for an Instagram account, so they can control who sees their content and who interacts with them. Teen account holders will be given information on the different settings and the benefits of private accounts.

If the teen doesn’t choose ‘private’ when signing up, we send them a notification later on highlighting the benefits of a private account and reminding them to check their settings. This is just a first step.

These updates are gradually rolling out in select regions at the moment, but the company says that they will be available “everywhere soon”. Instagram also says it will add further privacy settings in the coming months.
