Apple’s latest “Shot on iPhone” feature showcases an unexpected application for the iPhone 14 Pro’s LiDAR technology. The video highlights how the device’s LiDAR scanner and TrueDepth camera are used to create personalized prosthetics for pets, transforming their lives in remarkable ways.

The heartwarming video, titled “The Invincibles,” was released on Apple’s official YouTube channel on Tuesday, unveiling a story of resilience, innovation, and compassion.

How Apple’s LiDAR technology gives pets a second chance

The narrative follows Trip, a dog who overcame adversity with the help of this technology. Adopted as a four-month-old puppy, Trip faced potential euthanasia because of a deformed leg. After surgery to amputate the leg, Trip began life as a three-legged dog, with the prospect of a custom prosthetic.

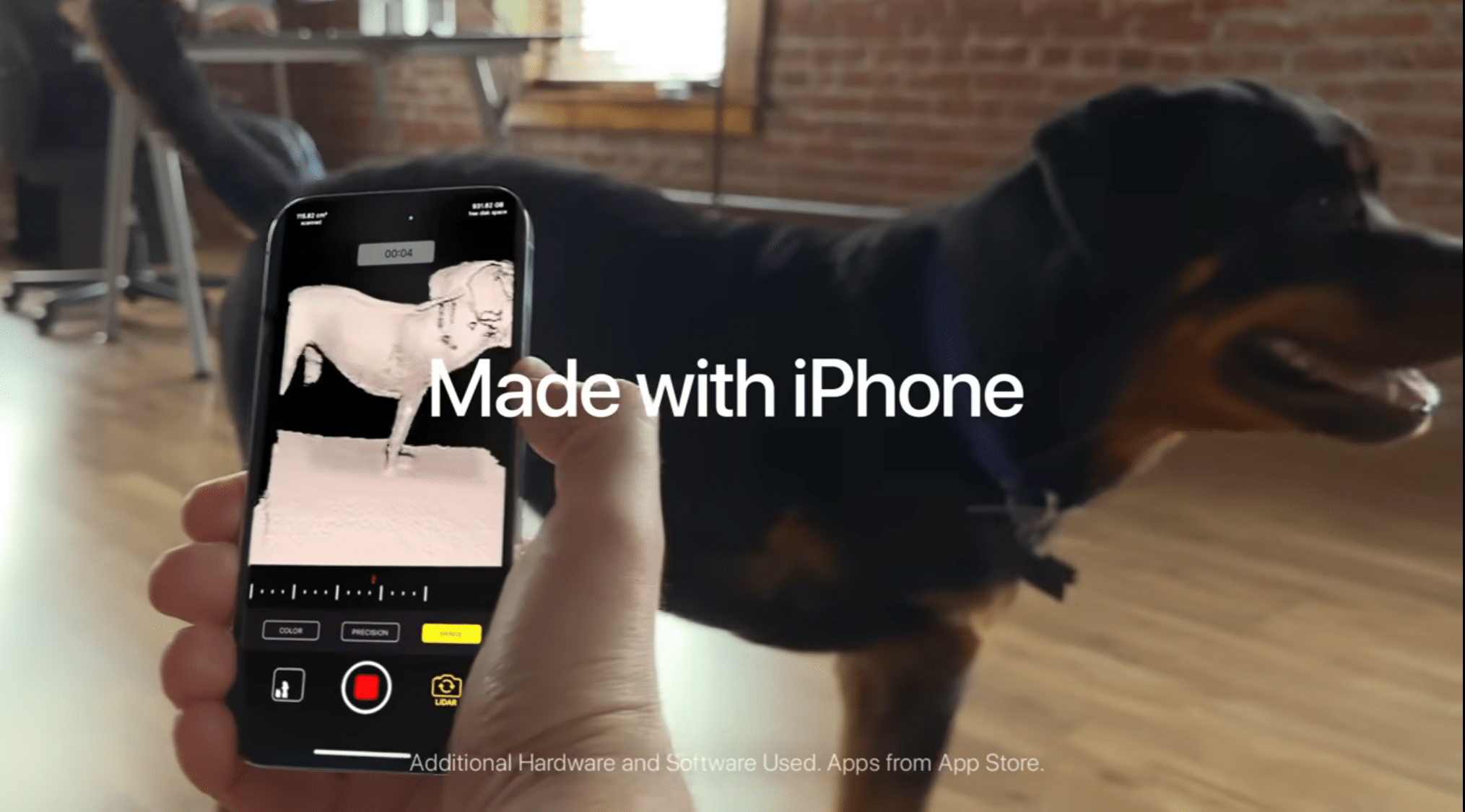

The breakthrough came through a collaboration with 3DPets, a small business specializing in tailor-made pet prosthetics. The process begins with a scan of the animal using the iPhone 14 Pro’s LiDAR scanner and TrueDepth camera. These sensors capture the precise measurements needed to create a mobility aid that suits the pet’s unique anatomy.

Once the digital model is complete, a customized prosthesis is crafted to match the pet’s form and function. 3D printing allows the device to be built from the scanned model, which helps achieve a close and comfortable fit, setting the stage for a new chapter in the pet’s life.

The video shows Trip running through fields and wading across rivers, embodying the triumphant spirit of the “Invincibles.” Stories of other dogs who have undergone similar transformations reinforce the emotional impact. Some pets are fitted with wheeled bases instead, which shows the versatility of the approach.

Beyond the technology itself, the video departs from Apple’s conventional “Shot on iPhone” tagline. The phrase “Made on iPhone” takes center stage instead, accompanied by the hashtag #MadeOniPhone.

Apple’s LiDAR technology and its applications

Introduced to iPhone with the iPhone 12 Pro and iPhone 12 Pro Max, LiDAR is a remote sensing method that uses laser light to measure distances and create detailed 3D maps or models of objects and environments. It works by emitting laser pulses and measuring the time it takes for the pulses to bounce back after hitting objects. This information is then used to create highly accurate depth and distance measurements.
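
The time-of-flight principle described above reduces to a single formula: distance equals the speed of light multiplied by the round-trip time, divided by two. The Python sketch below illustrates that arithmetic only. It is an idealized example and not Apple's implementation; the function names are hypothetical and chosen for illustration.

```python
# Idealized time-of-flight arithmetic (illustrative only, not Apple's code).

# Speed of light in vacuum, in metres per second (exact SI value).
SPEED_OF_LIGHT_M_PER_S = 299_792_458.0


def distance_from_round_trip(round_trip_seconds: float) -> float:
    """Return the distance to a surface given a pulse's round-trip time."""
    if round_trip_seconds < 0:
        raise ValueError("round-trip time cannot be negative")
    # The pulse travels to the object and back, so halve the total path.
    return SPEED_OF_LIGHT_M_PER_S * round_trip_seconds / 2.0


def round_trip_for_distance(distance_m: float) -> float:
    """Return the round-trip time a pulse needs for a given distance."""
    if distance_m < 0:
        raise ValueError("distance cannot be negative")
    return 2.0 * distance_m / SPEED_OF_LIGHT_M_PER_S


if __name__ == "__main__":
    # Hypothetical round-trip times, in nanoseconds.
    for ns in (2.0, 10.0, 33.0):
        metres = distance_from_round_trip(ns * 1e-9)
        print(f"{ns:5.1f} ns round trip -> {metres:.2f} m")
```

A round trip of 2 nanoseconds corresponds to roughly 0.3 meters, which shows how fine the timing must be to resolve distances at room scale.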

Apple uses LiDAR to enhance the following features:

- Augmented Reality (AR): LiDAR improves the accuracy and realism of AR experiences by providing more precise depth information. This helps virtual objects interact more realistically with the real world. For instance, AR apps can place virtual objects on surfaces more accurately and can better account for the physical objects around them.

- Camera improvements: In photography, LiDAR assists in creating better portrait mode effects by accurately distinguishing between the subject and the background. The depth data it captures helps produce a more natural-looking depth-of-field effect.

- Improved low-light performance: LiDAR can assist with autofocus in low-light conditions, allowing for faster and more accurate focusing even when lighting is challenging.

- Spatial awareness: The precise depth information offered by LiDAR enables devices to have a better understanding of the user’s environment, potentially enhancing features like room measurements and object recognition.

- ARKit: Apple’s ARKit, a framework for building AR applications, integrates LiDAR data to provide developers with tools to create more immersive and interactive AR experiences.
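
The camera item above describes using depth data to tell the subject apart from the background. As a rough illustration of that idea, the Python sketch below marks every pixel whose depth lies close to an assumed subject distance as foreground. It is a deliberately simplified example, not Apple's portrait mode pipeline; the function name, the tolerance value, and the sample depth map are all hypothetical.

```python
# Simplified depth-based subject/background separation (illustrative only).
from typing import List

DepthMap = List[List[float]]  # depth per pixel, in metres


def foreground_mask(
    depth_m: DepthMap,
    subject_depth_m: float,
    tolerance_m: float = 0.5,
) -> List[List[bool]]:
    """Return True for pixels within tolerance_m of the subject's depth."""
    return [
        [abs(d - subject_depth_m) <= tolerance_m for d in row]
        for row in depth_m
    ]


if __name__ == "__main__":
    # Hypothetical 2x3 depth map: a subject near 1.1 m, a wall near 3 m.
    sample = [
        [3.0, 1.2, 3.1],
        [2.9, 1.1, 3.0],
    ]
    for row in foreground_mask(sample, subject_depth_m=1.15, tolerance_m=0.3):
        print(row)
```

Pixels flagged as background could then be blurred while the subject stays sharp. A real pipeline would combine depth with image-based segmentation rather than rely on a fixed threshold.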