A union of journalists in Germany, Austria and Switzerland (DJV) is urging the EU Commission and the Austrian and German federal interior ministers to take action against Apple’s upcoming CSAM detection feature. Fearing that the new scanning system will be used to access users’ contacts, photos, emails, and other private information, the union says that Apple “intends to monitor cell phones locally in the future,” which it calls a “violation of the freedom of the press.”

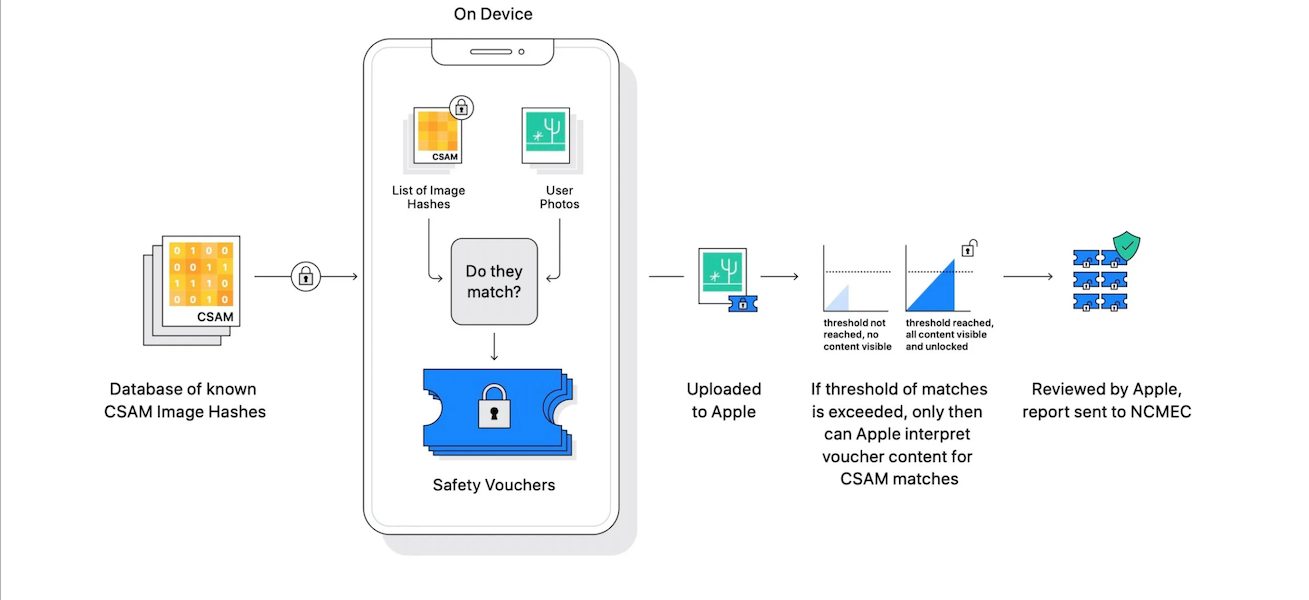

The CSAM detection feature is coming to iPhone in the fall with iOS 15 and other updates, with the aim of preventing the spread of child sexual abuse material (CSAM). Using a system called NeuralHash, users’ iCloud Photos will be scanned for known CSAM images, and the matched results will be stored as safety vouchers. Only when a user’s safety vouchers exceed the threshold of 30 CSAM images will the account be flagged and become eligible for human review. The reviewer will go through the images in the safety vouchers to separate false positives from genuine offenders, who will be reported to the relevant law enforcement agency.
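The threshold rule described above can be illustrated with a deliberately simplified sketch. It models only the counting step: an account becomes eligible for human review once its matches exceed 30. The names `Account`, `record_upload`, and `REVIEW_THRESHOLD` are hypothetical, and the hashes are opaque placeholder strings. This is not Apple’s implementation, and it leaves out the NeuralHash computation and the cryptographic protections Apple has described.

```python
from __future__ import annotations

from dataclasses import dataclass, field

# Threshold quoted in the article; the constant name is an assumption.
REVIEW_THRESHOLD = 30


@dataclass
class Account:
    """Hypothetical account that accumulates one voucher per matched upload."""

    account_id: str
    vouchers: list[str] = field(default_factory=list)

    def record_upload(self, image_hash: str, known_hashes: frozenset[str]) -> None:
        # Only hashes present in the known set produce a voucher.
        if image_hash in known_hashes:
            self.vouchers.append(image_hash)

    @property
    def eligible_for_review(self) -> bool:
        # Flag only when the voucher count exceeds the threshold.
        return len(self.vouchers) > REVIEW_THRESHOLD


if __name__ == "__main__":
    known = frozenset({"placeholder-hash"})
    account = Account("example-user")
    for _ in range(REVIEW_THRESHOLD):
        account.record_upload("placeholder-hash", known)
    print(account.eligible_for_review)  # still at the threshold, not over it
    account.record_upload("placeholder-hash", known)
    print(account.eligible_for_review)
```

In this sketch a single match, or even 30 matches, never triggers review on its own; only the 31st does, which mirrors the “exceed the limit of 30” wording above.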

However, critics such as the journalists’ union are concerned about what else the NeuralHash scanning system could be used for, especially by authoritarian governments.

DJV fears that Apple’s CSAM detection feature will roll out in Europe after launching in the U.S.

In the union’s press release, titled “Surveillance versus freedom of the press”, Hubert Krech, spokesman for the public editors’ association AGRA, said:

“In fact, this is also a tool with which a company wants to access other user data on their own devices, such as contacts and confidential documents. This is a danger to journalism and a clear violation of the European General Data Protection Regulation (GDPR), the ePrivacy Directive and fundamental rights.” Frank Überall, federal chairman of the German Association of Journalists (DJV), considers Apple’s plans to be only the first step: “Will images or videos of opponents of the regime or user data be checked at some point using an algorithm?”

Former US correspondent Priscilla Imboden of the Swiss media union SSM said:

Most European media have correspondents in the US, and they have contacts there. In this respect, European users are very much affected. What begins in the USA will certainly follow in Europe as well. “All journalists have confidential content on their smartphones, and an American private company wants to judge the admissibility of that content and also wants to view and forward it.” This would also make investigative research much more difficult.

To address these concerns, Craig Federighi, Apple’s senior vice president of Software Engineering, detailed the protective layers the company has implemented against such abuse. He acknowledged that Apple should have handled the announcement of CSAM detection and Communication Safety in Messages better to avoid confusion.

“I grant you, in hindsight, introducing these two features at the same time was a recipe for this kind of confusion. It’s really clear a lot of messages got jumbled up pretty badly. I do believe the soundbite that got out early was, ‘oh my god, Apple is scanning my phone for images.’ This is not what is happening.”
