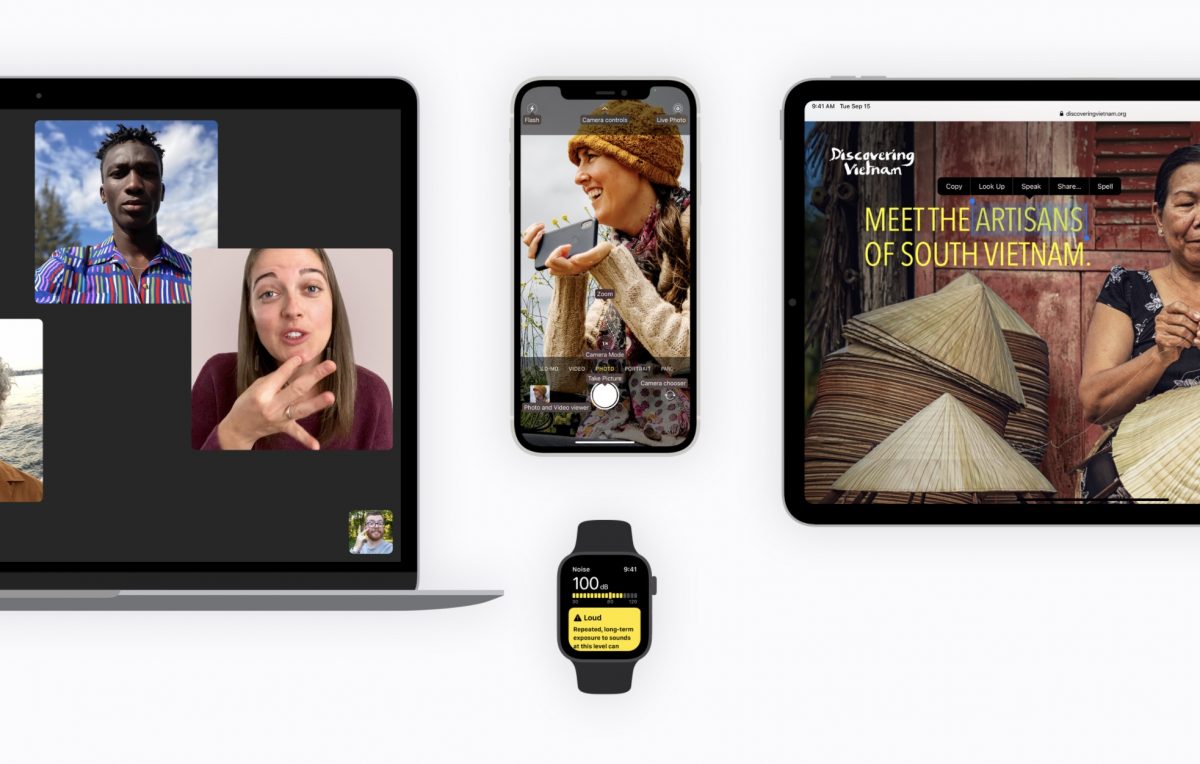

Apple introduced a new ‘Screen Recognition’ technology in iOS 14 that automatically labels every button, tab, and slider to make it easier for blind users to operate an iPhone. Recently, Apple engineers sat down with TechCrunch to give detailed insight into the company’s approach to improving its accessibility features.

For years, the accessibility features across Apple’s devices have helped people with visual and hearing impairments not only operate those devices but also carry out complex tasks such as sending emails, browsing the web, composing music, and more.

A new AR-enhanced ‘People Detection’ feature was released in iOS 14.2, and the Apple Support app was updated with improved accessibility along with other features. VoiceOver is already an acclaimed Apple accessibility feature that enables musicians, lawyers, activists, and entrepreneurs with disabilities to carry on their work.

I thought I would share how I, as someone who is visually impaired use my iPhone.☺️ pic.twitter.com/wPI9smOIq0

— Kristy Viers (@Kristy_Viers) July 26, 2020

Engineers discuss improvements in Apple accessibility features

Discussing the improvements in Apple accessibility features from iOS 13 to iOS 14, Chris Fleizach, an iOS accessibility engineer, and Jeff Bigham, a member of the AI/ML team, explained the philosophy behind the new Screen Recognition labeling technology. They said,

“We looked for areas where we can make inroads on accessibility, like image descriptions. In iOS 13 we labeled icons automatically – Screen Recognition takes it another step forward. We can look at the pixels on screen and identify the hierarchy of objects you can interact with, and all of this happens on device within tenths of a second.

It was done by taking thousands of screenshots of popular apps and games, then manually labeling them as one of several standard UI elements. This labeled data was fed to the machine learning system, which soon became proficient at picking out those same elements on its own.”

Bigham said that although the idea of Screen Recognition is not new, it has been perfected now due to advancements in technology.

“VoiceOver has been the standard bearer for vision accessibility for so long. If you look at the steps in development for Screen Recognition, it was grounded in collaboration across teams — Accessibility throughout, our partners in data collection and annotation, AI/ML, and, of course, design. We did this to make sure that our machine learning development continued to push toward an excellent user experience.”

This new capability could have a profound impact by making apps more accessible to users with vision impairments. Currently, Screen Recognition is available on iOS and might be released on the Mac in the future.
